In Airflow, DAGs (directed acyclic graphs) are defined as Python code. There is no user interface to create workflows, so if you want Airflow to do more than run one of the example DAGs, you have to write Python code. Airflow executes all Python code in the `dags_folder` and loads any DAG objects that appear in `globals()`.

To monitor the Airflow DAGs, select the Airflow environment you created and sign in to the Airflow UI with the username and password provided during the Airflow Integration Runtime creation. (If needed, you can reset the username or password by editing the Airflow Integration Runtime.)

If you're using Airflow version 1.x, you need to delete DAGs that are deployed on any Airflow environment (IR) in two different places.

With the Kubernetes Executor, Airflow uses persistent volumes for DAGs and logs, so make sure your Kubernetes cluster's storage provisioner supports persistent volumes.

Problem: Imported DAGs don't show up when you sign in to the Airflow UI.

Mitigation: Sign in to the Airflow UI and check for DAG parsing errors. Imported DAGs can be missing when the DAG files contain incompatible code. The Airflow UI shows the exact files and line numbers that have the issue.

Problem: DAG import is taking over 5 minutes.

Mitigation: Reduce the size of the DAGs imported in a single import. One way to achieve this is by creating multiple DAG folders with fewer DAGs across multiple containers. Importing DAGs can take a couple of minutes during Preview, and you can track import status updates in the notification center (bell icon in the ADF UI).

Prerequisites:

- Azure subscription: If you don't have an Azure subscription, create a free account before you begin.
- Create or select an existing Data Factory in a region where the Managed Airflow preview is supported.

To set up and configure your Managed Airflow environment, go to Manage hub -> Airflow (Preview) -> +New to create a new Airflow environment.

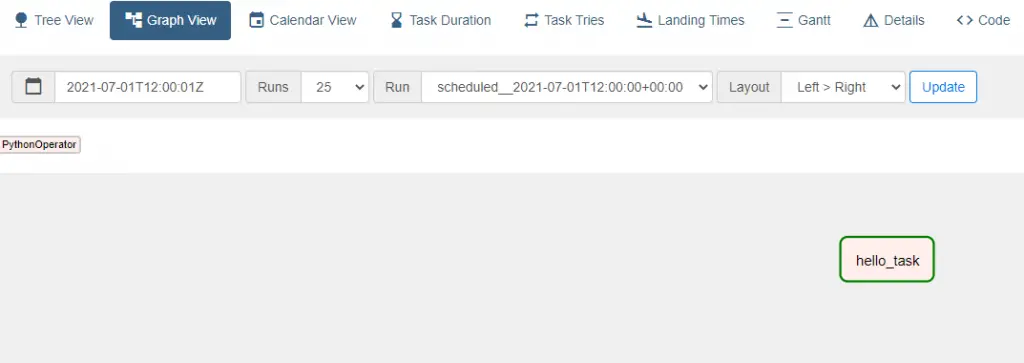
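To make the `globals()` rule concrete (and to show why a DAG that is never bound at module level won't appear in the UI), here is a minimal sketch in plain Python. It does not use Airflow: `ToyDAG` and `discover_dags` are hypothetical stand-ins, and Airflow's real loader does much more (imports, timeouts, error reporting). Newer Airflow 2 releases also auto-register DAGs declared in a `with DAG(...)` block, so treat this only as an approximation of the rule described above.

```python
"""Toy illustration of module-level DAG discovery (not Airflow's real loader)."""


class ToyDAG:
    """Hypothetical stand-in for airflow.DAG so the sketch runs without Airflow."""

    def __init__(self, dag_id: str) -> None:
        self.dag_id = dag_id


def discover_dags(source: str) -> list[str]:
    """Execute a DAG file's source and return the ids of DAGs bound in its globals."""
    namespace = {"ToyDAG": ToyDAG}
    exec(source, namespace)  # the whole file runs, as Airflow runs every DAG file
    return sorted(
        obj.dag_id for obj in namespace.values() if isinstance(obj, ToyDAG)
    )


DAG_FILE = '''
visible = ToyDAG("visible_dag")   # bound at module level: discovered

def build():
    return ToyDAG("hidden_dag")   # only a local value inside a function

build()                           # return value discarded: never discovered
'''

if __name__ == "__main__":
    print(discover_dags(DAG_FILE))  # ['visible_dag']
```

If a DAG is built by a factory function, assigning the result to a module-level name is what makes it visible under this rule.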

You can launch the Airflow UI from ADF using a command line interface (CLI) or a software development kit (SDK) to manage your DAGs.

Managed Airflow for Azure Data Factory relies on the open source Apache Airflow application and uses Python-based directed acyclic graphs (DAGs) to run your orchestration workflows. To use this feature, you need to provide your DAGs and plugins in Azure Blob Storage. Documentation and more tutorials for Airflow can be found on the Apache Airflow Documentation or Community pages.

For debugging, I run `airflow test dag_id task_id` on a Vagrant machine and debug it with PyCharm. PyCharm's documentation on this subject should show you how to create an appropriate "Python Remote Debug" configuration. You should be able to use the same method even if you're running Airflow directly on localhost.
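Parse errors only surface in the Airflow UI after the DAG files have been imported, so a quick local check before copying DAGs to Blob Storage can catch some of them earlier. The sketch below is a hypothetical helper, not part of ADF or Airflow, and it assumes your DAGs live in a local folder (defaulting to `dags`). It only compiles each file, so it catches syntax errors but not import errors or code that is incompatible with the Airflow version in your environment.

```python
import sys
from pathlib import Path
from typing import Optional


def check_source(name: str, source: str) -> Optional[tuple[str, int, str]]:
    """Return (file, line, message) if the source fails to compile, else None."""
    try:
        compile(source, name, "exec")  # parses only; the code is not executed
    except SyntaxError as err:
        return (name, err.lineno or 0, err.msg)
    return None


def find_syntax_errors(dags_folder: str) -> list[tuple[str, int, str]]:
    """Compile every .py file under dags_folder and collect syntax errors."""
    problems = []
    for path in sorted(Path(dags_folder).rglob("*.py")):
        result = check_source(str(path), path.read_text(encoding="utf-8"))
        if result is not None:
            problems.append(result)
    return problems


if __name__ == "__main__":
    # Hypothetical default: a local "dags" folder mirroring what you upload.
    folder = sys.argv[1] if len(sys.argv) > 1 else "dags"
    for file, line, message in find_syntax_errors(folder):
        print(f"{file}:{line}: {message}")
```

Running `airflow test` against a single task, as described above, remains the way to catch errors that only appear at import or run time.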
